Configuration of Network Security Services

The virtual data center provides native network security functionality for virtual machines hosted in neoCloud. The first is perimeter security for the virtual data center, provided by an Edge Gateway: a dedicated virtual appliance for the customer's virtual data center. The second is the distributed firewall, which provides protection at the virtual machine level, even between virtual machines on the same network.

The Edge Gateway is connected to all routed networks in the virtual data center, where it has a network interface and an IP address in each network. It is also connected to the internet, where it has one or more public IP addresses. In specific scenarios, customers may have multiple Edge Gateways for isolating different networks, for connectivity over L2 links, and for other use cases. Each Edge Gateway provides the following functions:

- Routing - routing of network traffic among the networks connected to the Edge and between those networks and the internet. In addition to routing between connected networks and the internet, it supports static routes and dynamic routing protocols.

- Firewall - enables defining rules that allow or deny specific network traffic among the networks connected to the Edge, as well as between those networks and the internet.

- NAT - Network Address Translation - translation (masking) of IP addresses, either from internal networks towards the internet (for example, to provide internet access) or from the internet to private IP addresses (for example, to publish a service on the internet).

- DHCP - Dynamic Host Configuration Protocol - enables automated assignment of dynamic IP addresses from a configured range to virtual machines in networks connected to the Edge.

- VPN - Virtual Private Network - establishes an encrypted network tunnel between the virtual data center and another customer location (headquarters, branch office, etc.) over IPsec VPN or L2 VPN, or with a client computer through the SSL VPN software client.

- Load Balancer - enables distribution of network or application traffic across two or more servers (virtual machines) in the virtual data center.

The Distributed Firewall functionality applies a firewall on the network adapter of each virtual machine, while being managed centrally and with ease. With it, users can define allow and deny rules between virtual machines located in the same network. Additionally, by defining dynamic groups, users can configure security rules for virtual machines based on their name or operating system, or apply security rules uniformly by assigning tags to virtual machines (for example, applying the same rules to all application servers). The distributed firewall is an advanced functionality that is charged additionally and is available upon request.
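A dynamic group can be thought of as a membership query that is re-evaluated against the current virtual machine inventory, so rules that reference the group follow the machines automatically. The following is a minimal, hypothetical Python sketch of that idea: the class names, the substring matching on name and operating system, and the "match any criterion" semantics are assumptions for illustration, not the Distributed Firewall's actual logic.

```python
from dataclasses import dataclass, field


@dataclass
class VirtualMachine:
    name: str
    os: str
    tags: set = field(default_factory=set)


@dataclass
class DynamicGroup:
    """Hypothetical dynamic security group: a VM is a member if it matches
    ANY configured criterion (name substring, OS substring or tag)."""
    name: str
    name_contains: str = ""
    os_contains: str = ""
    tag: str = ""

    def matches(self, vm):
        return any((
            bool(self.name_contains) and self.name_contains.lower() in vm.name.lower(),
            bool(self.os_contains) and self.os_contains.lower() in vm.os.lower(),
            bool(self.tag) and self.tag in vm.tags,
        ))

    def members(self, inventory):
        # Membership is recomputed from the current inventory, so a newly
        # created VM that matches is covered without editing any rule.
        return [vm.name for vm in inventory if self.matches(vm)]
```

For example, a group with `tag="app-server"` would contain every virtual machine carrying that tag, and a firewall rule referencing the group would apply to all of them.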

Managing an Edge Gateway

Managing an Edge Gateway is perhaps the most complex and extensive part of virtual data center management. For this reason, this documentation covers only the basics needed for the most frequent, day-to-day management tasks on the Edge Gateway.

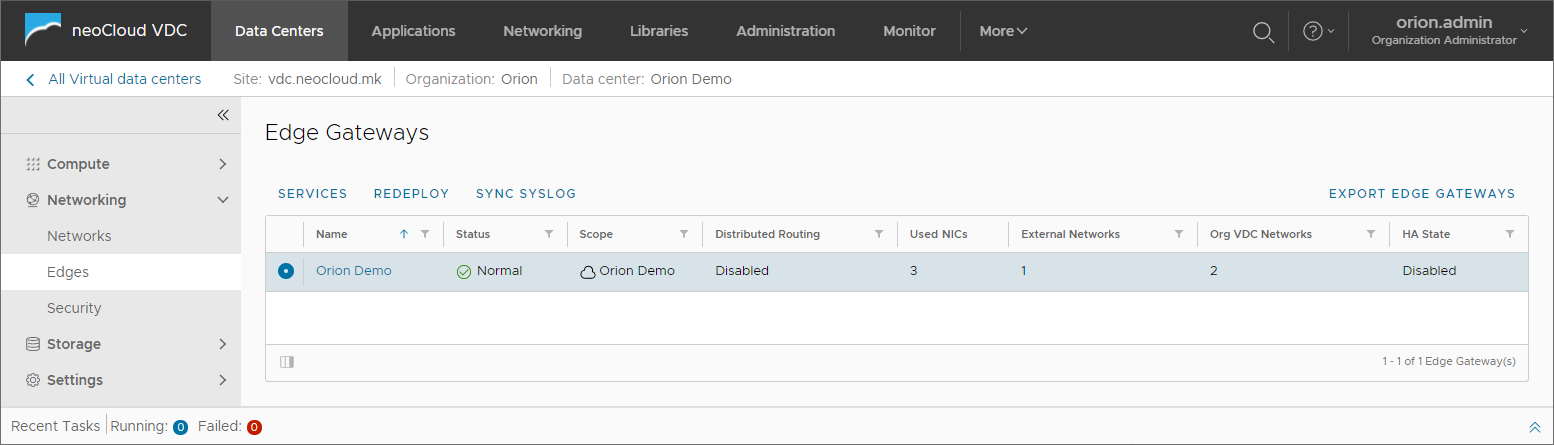

Choosing Edges from the left menu opens the view of all available Edge Gateways in the virtual data center. For each Edge Gateway, the table shows the total number of used network interfaces, the number of external networks and the number of internal networks connected to the Edge. One Edge Gateway can have a maximum of 10 network interfaces, meaning it can be connected to a total of 10 networks (both external and internal).

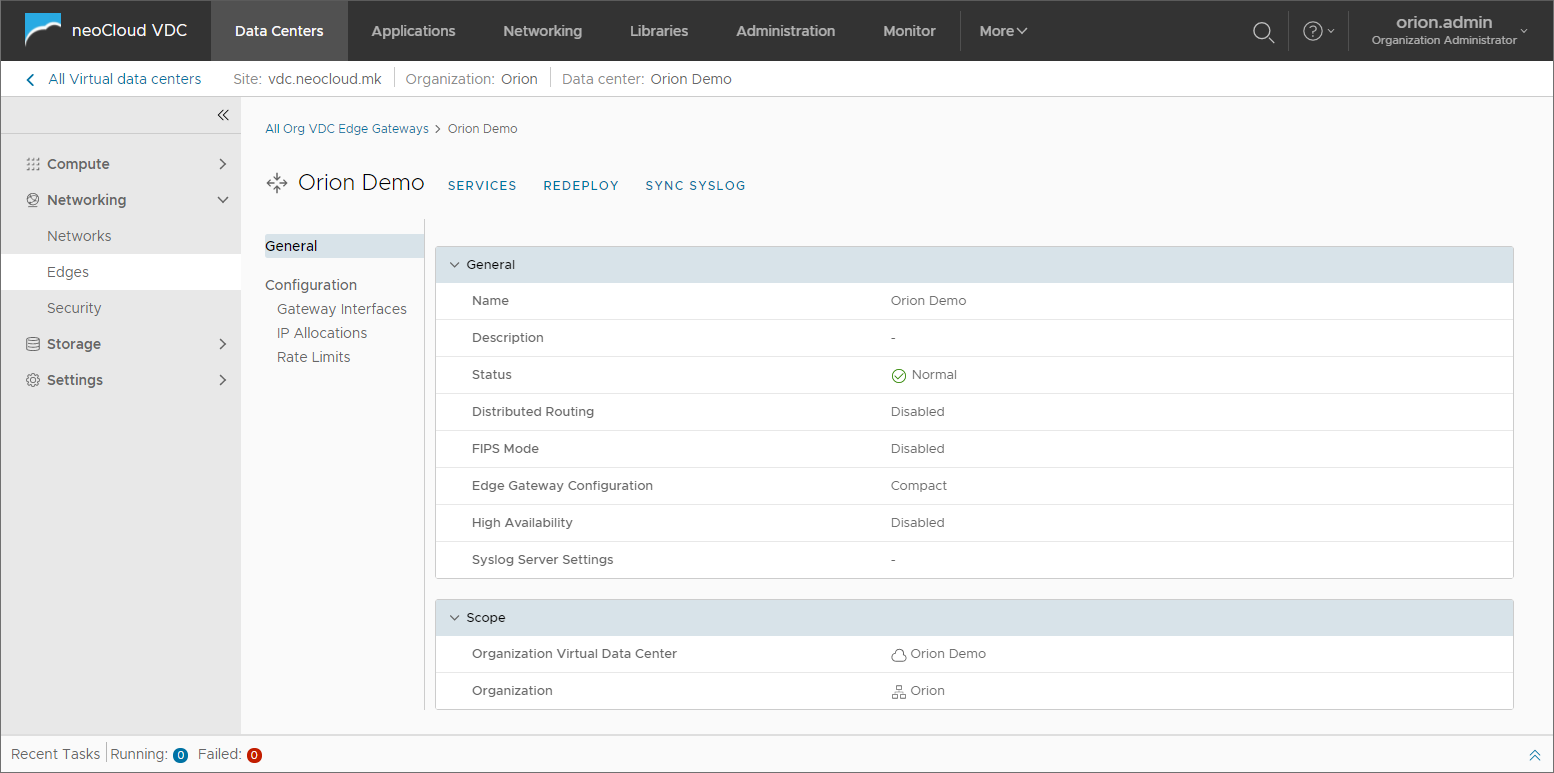

When one of the Edge Gateways is selected, additional information is displayed.

The General section shows details such as the name, the status, the type (i.e. the size of the Edge appliance), whether High Availability is enabled, and other information.

The size defines the performance of the appliance and is determined by neoCloud based on the size of the virtual data center. If High Availability (HA) is enabled, two Edge appliances are placed on different physical servers in the infrastructure, so that the network interruption is minimal (several seconds) if a physical server fails. If HA is not enabled, the network interruption in the event of a physical server failure is typically between 2 and 5 minutes.

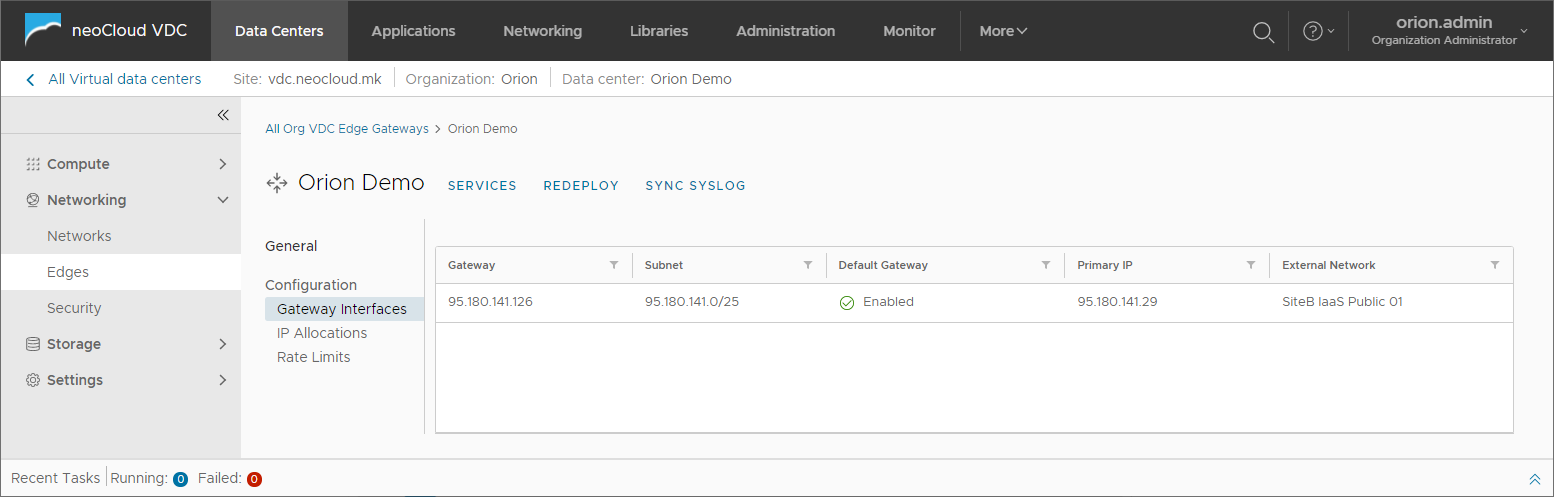

The Configuration section is divided into three subsections.

Gateway Interfaces, the first subsection, shows the external networks (public IP addresses) to which the Edge appliance is connected and the primary IP address of each of these interfaces. This address can be used for routing, for firewall and NAT rules, and for VPN and Load Balancer configuration.

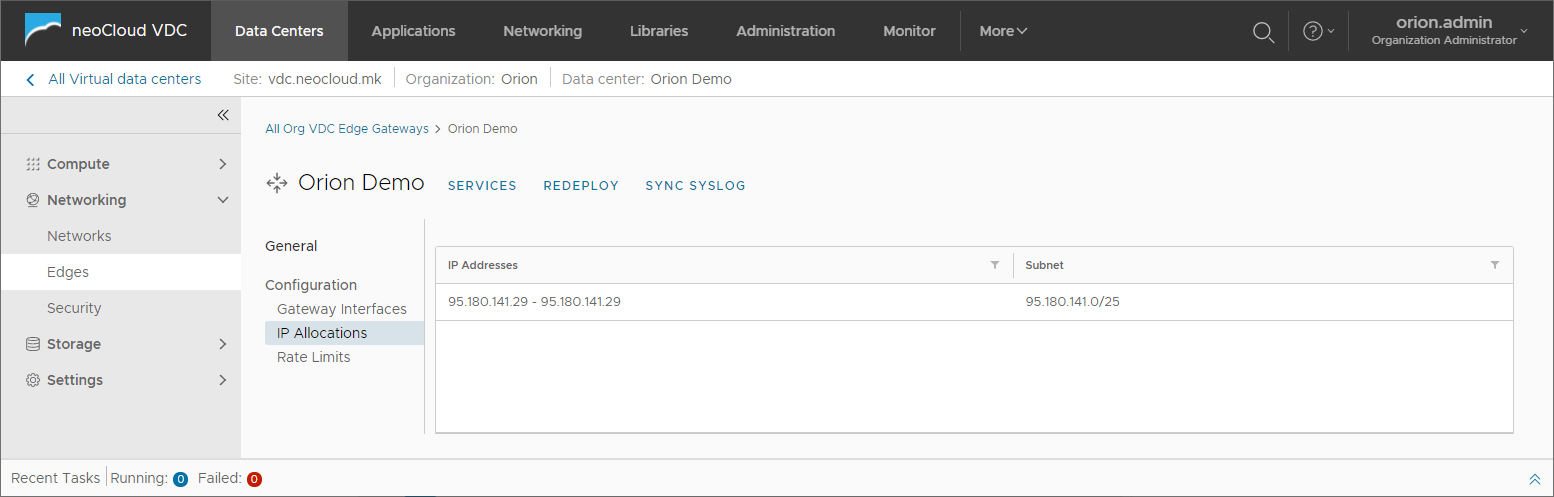

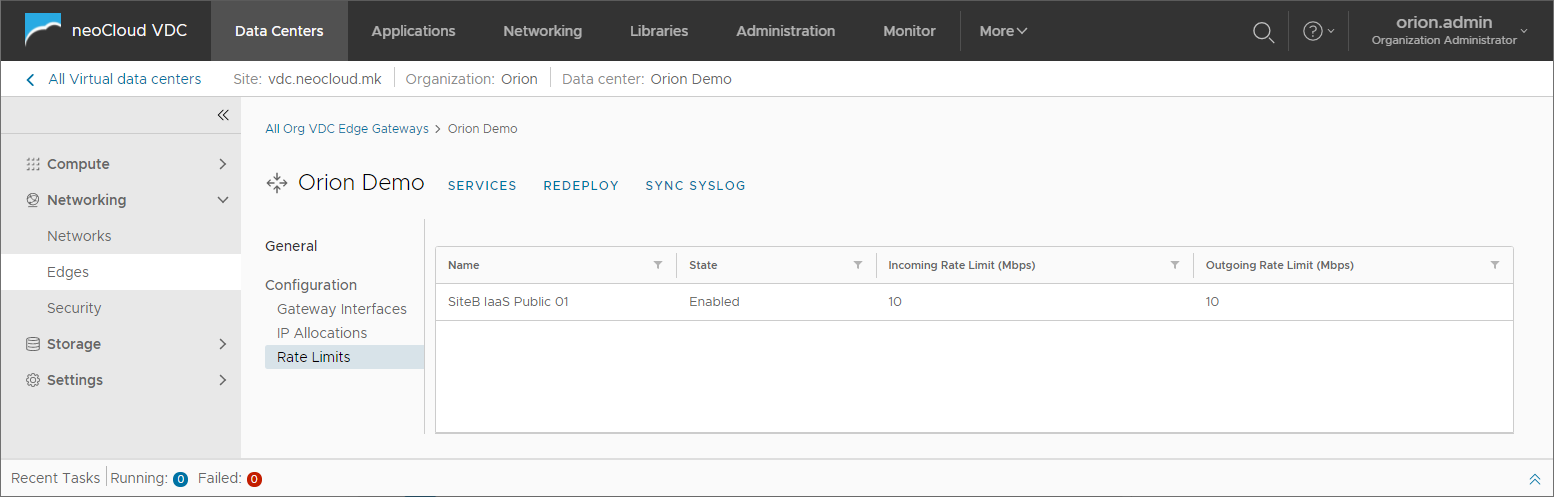

IP Allocations, the second subsection, shows the additional IP addresses assigned to the Edge for publishing additional services and functions provided by the Edge.

The last subsection, Rate Limits, shows the network bandwidth defined for all external interfaces of the Edge appliance.

Additional options for managing an Edge device are Redeploy and Services. Redeploy is used in rare circumstances when there is an issue with the virtual appliance: it deploys a new virtual appliance and applies the latest configuration to it. The same method is used when software upgrades are performed on the appliance, but upgrades are done by neoCloud administrators. Redeploying an Edge causes a network interruption of several seconds.

Clicking Services lets the user manage most of the configuration options of the Edge appliance, as described in the following sections.

Firewall

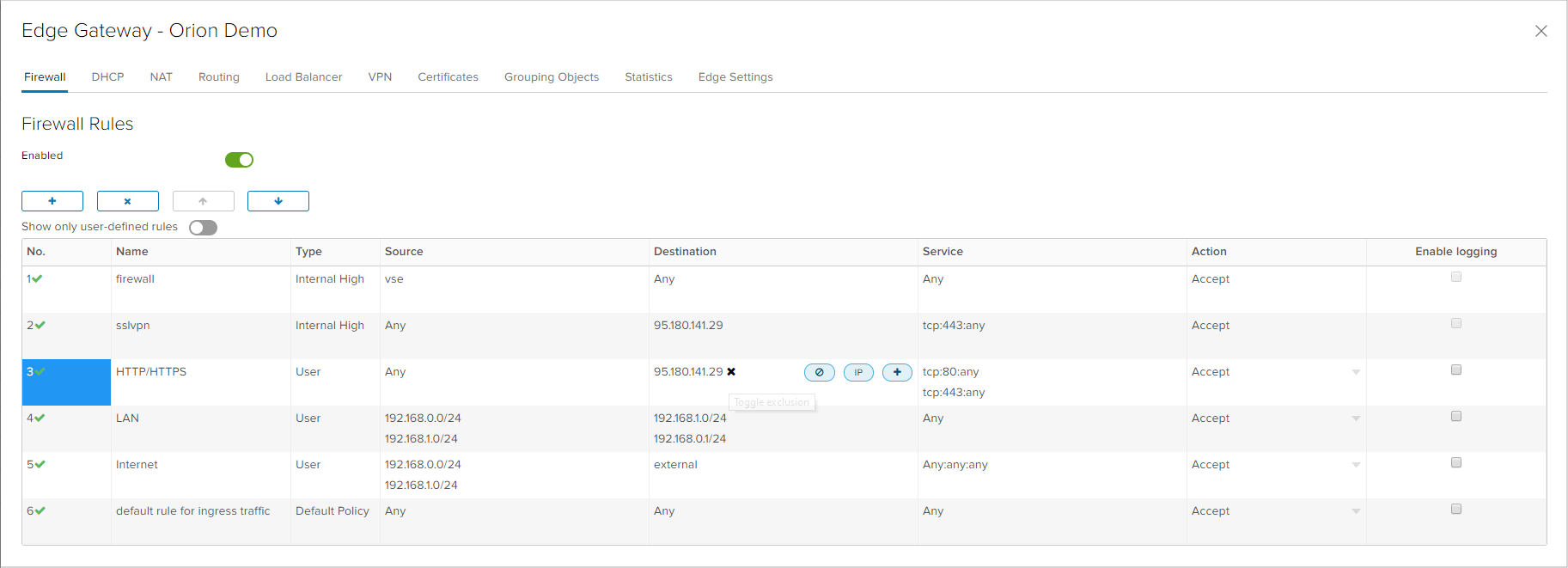

Above the main firewall rules table there are four options: adding a new rule, deleting an existing rule, and moving a rule up or down with the arrows. When a new rule is added, a new entry appears in the table; each of its cells is interactive and can be edited by clicking it.

The first column of the table shows the rule number. Clicking the rule number toggles the state of the rule between enabled (green checkmark) and disabled (red x-mark). Clicking the second column lets the user change the rule name. The third column shows whether the rule is a system rule generated automatically by the Edge (Internal High) or defined by a user (User).

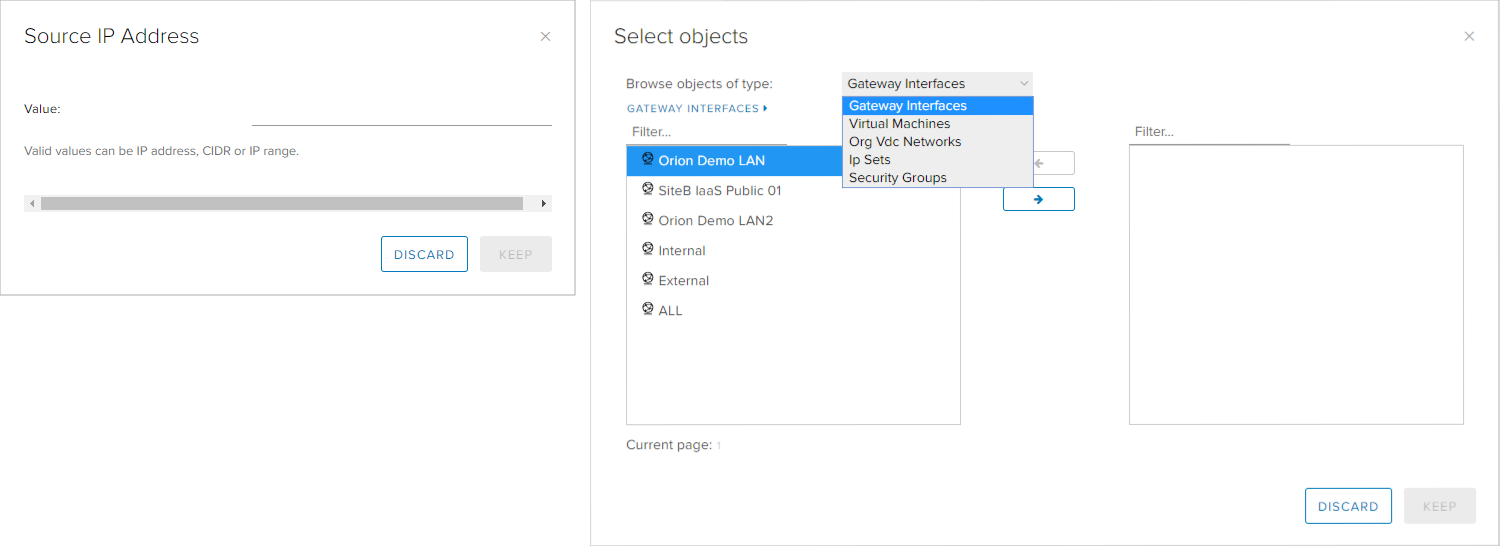

Positioning the cursor on the Source or Destination column shows three options: excluding the selected objects, adding an IP, and adding an object with +. If the user chooses the IP option, an IP address, an IP range or the value any must be entered. Multiple IP addresses or ranges can be added to a single rule by clicking the IP option again after the previous address has been entered.

The option for adding objects simplifies the definition of firewall rules. The user can select different objects such as gateway interfaces, virtual machines, organization networks, IP sets and security groups. Security groups and IP sets are explained in the Grouping Objects section of this document. If the user chooses gateway interface objects, then in addition to the individual networks connected to the Edge, the options Internal (all internal networks), External (all external networks) and ALL (all networks) are available.

The sixth column, Services, defines the protocol and port filtered by the rule. Positioning the cursor on the field shows a + sign for adding a protocol/port. In the window that opens, the user selects one of the UDP, TCP or ICMP protocols, or Any for all protocols, and, for UDP and TCP, the required source and destination ports.

The Actions column defines if the rule will allow traffic to pass (Accept) or will block traffic (Deny).

Objects (such as IP addresses, objects, protocols and ports) are deleted by clicking the X next to them. After all necessary firewall rules are defined, the last step is to save the new configuration on the Edge Gateway by clicking Save Changes on the yellow status bar that appears at the top of the window.

The configuration shown in the next image contains three rules, each serving a different purpose:

- Rule 3: allows traffic from any network to the public IP address of the Edge device on TCP ports 80 and 443, so the Edge passes HTTP and HTTPS traffic. Combined with an appropriate NAT rule, this can be used to publish a web server.

- Rule 4: allows traffic between the 192.168.0.0/24 and 192.168.1.0/24 networks on any protocol and any port, in both directions. This rule allows all traffic between two networks in the virtual data center, but it is not recommended from a security standpoint.

- Rule 5: allows traffic from the 192.168.0.0/24 and 192.168.1.0/24 networks towards external networks (for example, the internet) on any protocol and any port. This rule can be used to give virtual machines in these networks internet access.
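Because rules are numbered and can be moved up and down, their order matters. The following simplified, stateless Python model of the three example rules assumes first-match, top-down evaluation, a hypothetical `external` object meaning "any address outside the two internal networks", and a default deny rule at the bottom of the table. The public IP 95.180.141.29 is borrowed from the NAT example. It is an illustration, not the Edge's implementation; in particular, return traffic of allowed sessions, which a stateful firewall permits automatically, is not modelled.

```python
import ipaddress
from dataclasses import dataclass

# Addresses from the examples in this document; treat them as placeholders.
INTERNAL = [ipaddress.ip_network("192.168.0.0/24"),
            ipaddress.ip_network("192.168.1.0/24")]
EDGE_PUBLIC = [ipaddress.ip_network("95.180.141.29/32")]


@dataclass
class Rule:
    number: int
    name: str
    source: object        # "any", "external" or a list of networks
    destination: object   # "any", "external" or a list of networks
    protocol: str         # "tcp", "udp", "icmp" or "any"
    ports: frozenset      # destination ports; empty means any port
    action: str           # "accept" or "deny"
    enabled: bool = True


def _matches(ip, target):
    if target == "any":
        return True
    if target == "external":
        # Hypothetical model of the External object: anything not internal.
        return not any(ip in net for net in INTERNAL)
    return any(ip in net for net in target)


def evaluate(rules, src, dst, protocol, port=None, default_action="deny"):
    """Return (rule number, action) of the first enabled rule that matches."""
    src, dst = ipaddress.ip_address(src), ipaddress.ip_address(dst)
    for rule in rules:  # checked top-down, in table order
        if (rule.enabled
                and _matches(src, rule.source)
                and _matches(dst, rule.destination)
                and rule.protocol in ("any", protocol)
                and (not rule.ports or port in rule.ports)):
            return rule.number, rule.action
    return None, default_action  # assumed default rule at the bottom


RULES = [
    Rule(3, "Publish web server", "any", EDGE_PUBLIC, "tcp", frozenset({80, 443}), "accept"),
    Rule(4, "Between internal networks", INTERNAL, INTERNAL, "any", frozenset(), "accept"),
    Rule(5, "Internal to external", INTERNAL, "external", "any", frozenset(), "accept"),
]
```

For instance, `evaluate(RULES, "203.0.113.7", "95.180.141.29", "tcp", 443)` matches rule 3, while the same request on port 22 falls through to the default action.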

NAT

There are two types of translation rules that can be created in the NAT tab:

- SNAT (Source NAT) - a rule for translating a source address, typically used for translating an internal IP address or network into a public IP address. With SNAT, internal addresses appear on the internet as the public IP address.

- DNAT (Destination NAT) - a rule for translating a destination address, typically used for translating a public IP address into a private IP address, where a specific port may be defined as well. With DNAT, a server with an internal IP address is published on the internet - either fully or on a specific port.

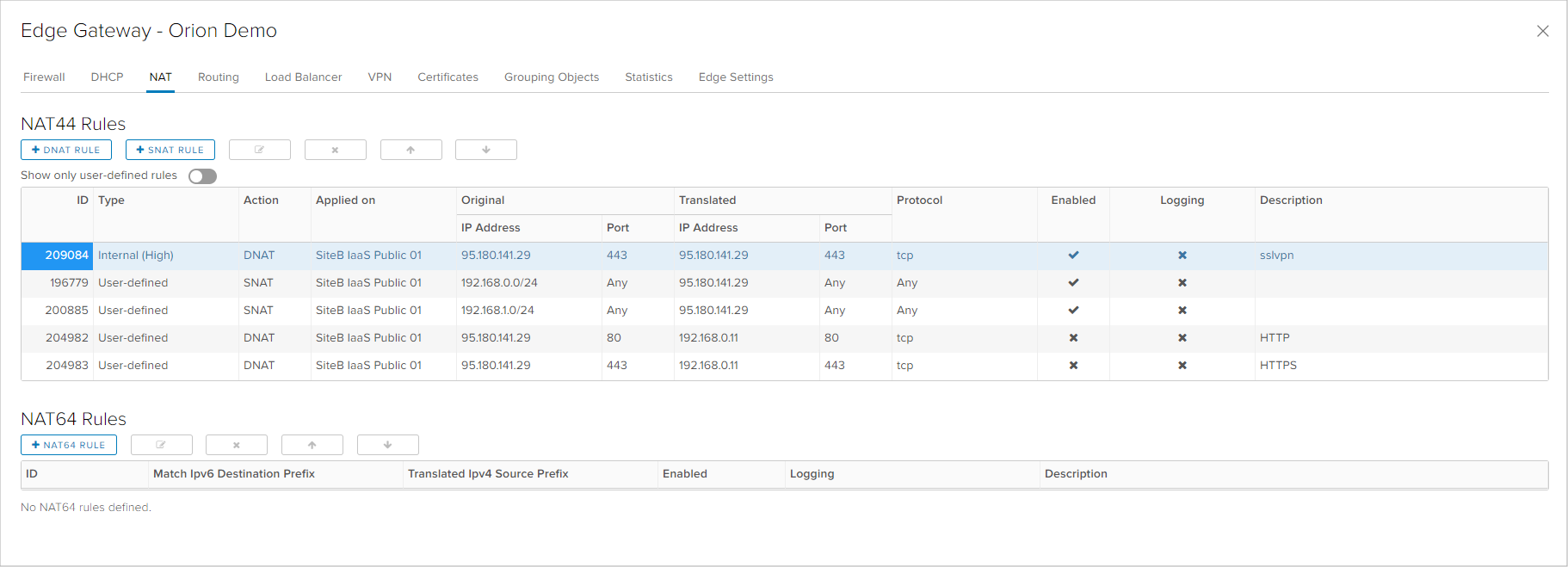

Next to the button for creating SNAT and DNAT rules, above the main NAT rules table, are the options for editing, deleting and reordering existing rules. As with firewall rules, there are system-defined Internal (High) rules and User-defined rules.

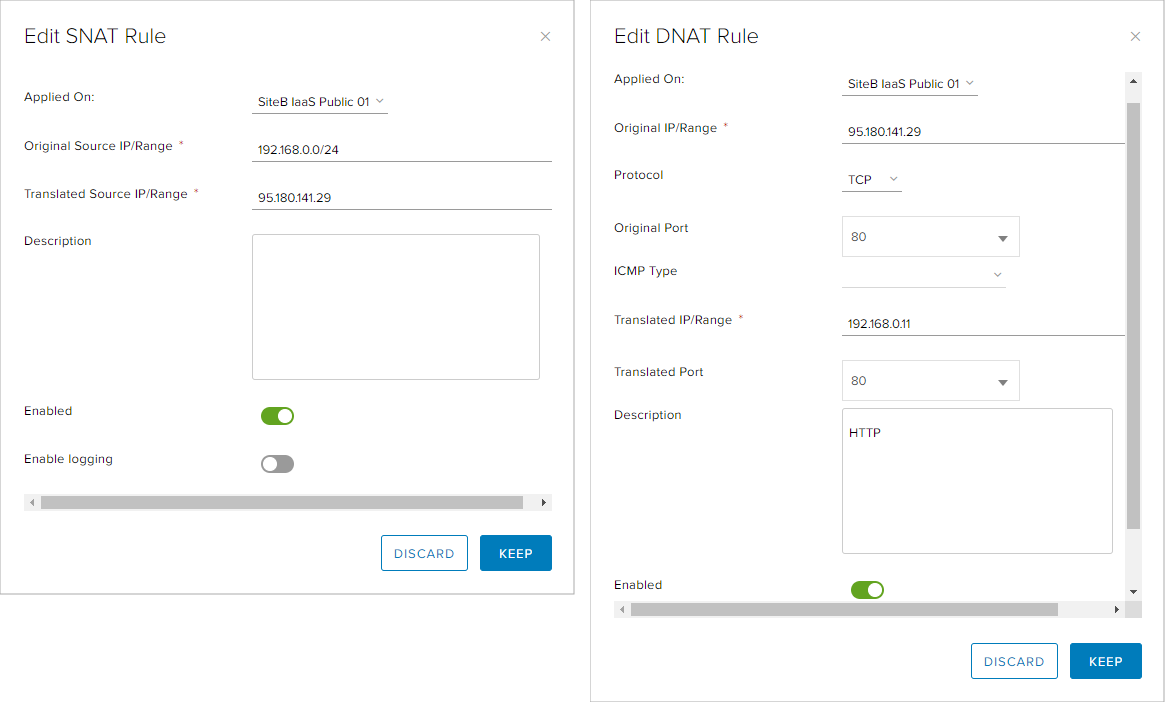

When creating or editing a SNAT rule, the user needs to define the interface on which the rule is applied, the source IP address or network, the translated IP address and the description of the rule, and choose whether the rule is enabled or disabled.

When creating or editing a DNAT rule, the user needs to define more parameters: the interface on which the rule is applied, the original IP address and port, and the protocol, followed by the translated IP address, the translated port, the description and the enable/disable option.

The configuration shown in the next image contains four rules with the following purposes:

- Rules 2 and 3: SNAT rules that translate the internal networks 192.168.0.0/24 and 192.168.1.0/24 into the external IP address 95.180.141.29. Protocol and port cannot be specified in SNAT rules, so they apply to all traffic.

- Rules 4 and 5: DNAT rules that translate TCP traffic on ports 80 and 443 destined for the public IP address 95.180.141.29 into the private IP address 192.168.0.11 on the same ports. Combined with firewall rule 3, these rules make a virtual machine with a private IP address available from the internet over HTTP and HTTPS.
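The effect of the four example rules on a packet's addresses can be sketched as follows. This is a simplified Python illustration, not the Edge's implementation: the `Packet` type and function names are hypothetical, and source-port translation (which many-to-one SNAT normally also performs) is omitted. It shows the key difference between the two rule types: SNAT matches only the source address, while DNAT matches the original IP, protocol and port.

```python
import ipaddress
from dataclasses import dataclass, replace

PUBLIC_IP = "95.180.141.29"  # external IP from the example rules


@dataclass(frozen=True)
class Packet:
    src: str
    dst: str
    protocol: str
    dst_port: int


# SNAT rules 2 and 3: internal network -> translated (public) source IP.
SNAT_RULES = [
    (ipaddress.ip_network("192.168.0.0/24"), PUBLIC_IP),
    (ipaddress.ip_network("192.168.1.0/24"), PUBLIC_IP),
]

# DNAT rules 4 and 5: (original IP, protocol, original port) -> (translated IP, translated port).
DNAT_RULES = {
    (PUBLIC_IP, "tcp", 80): ("192.168.0.11", 80),
    (PUBLIC_IP, "tcp", 443): ("192.168.0.11", 443),
}


def apply_snat(pkt):
    """Outbound: only the source address is matched; protocol and port are not."""
    src = ipaddress.ip_address(pkt.src)
    for network, translated_ip in SNAT_RULES:
        if src in network:
            return replace(pkt, src=translated_ip)
    return pkt


def apply_dnat(pkt):
    """Inbound: match original IP, protocol and port, then rewrite the destination."""
    target = DNAT_RULES.get((pkt.dst, pkt.protocol, pkt.dst_port))
    if target is None:
        return pkt  # no DNAT rule: destination left unchanged
    ip, port = target
    return replace(pkt, dst=ip, dst_port=port)
```

An inbound HTTPS packet to 95.180.141.29:443 is rewritten to 192.168.0.11:443, while a packet to port 22 passes through `apply_dnat` unchanged because no rule matches it.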

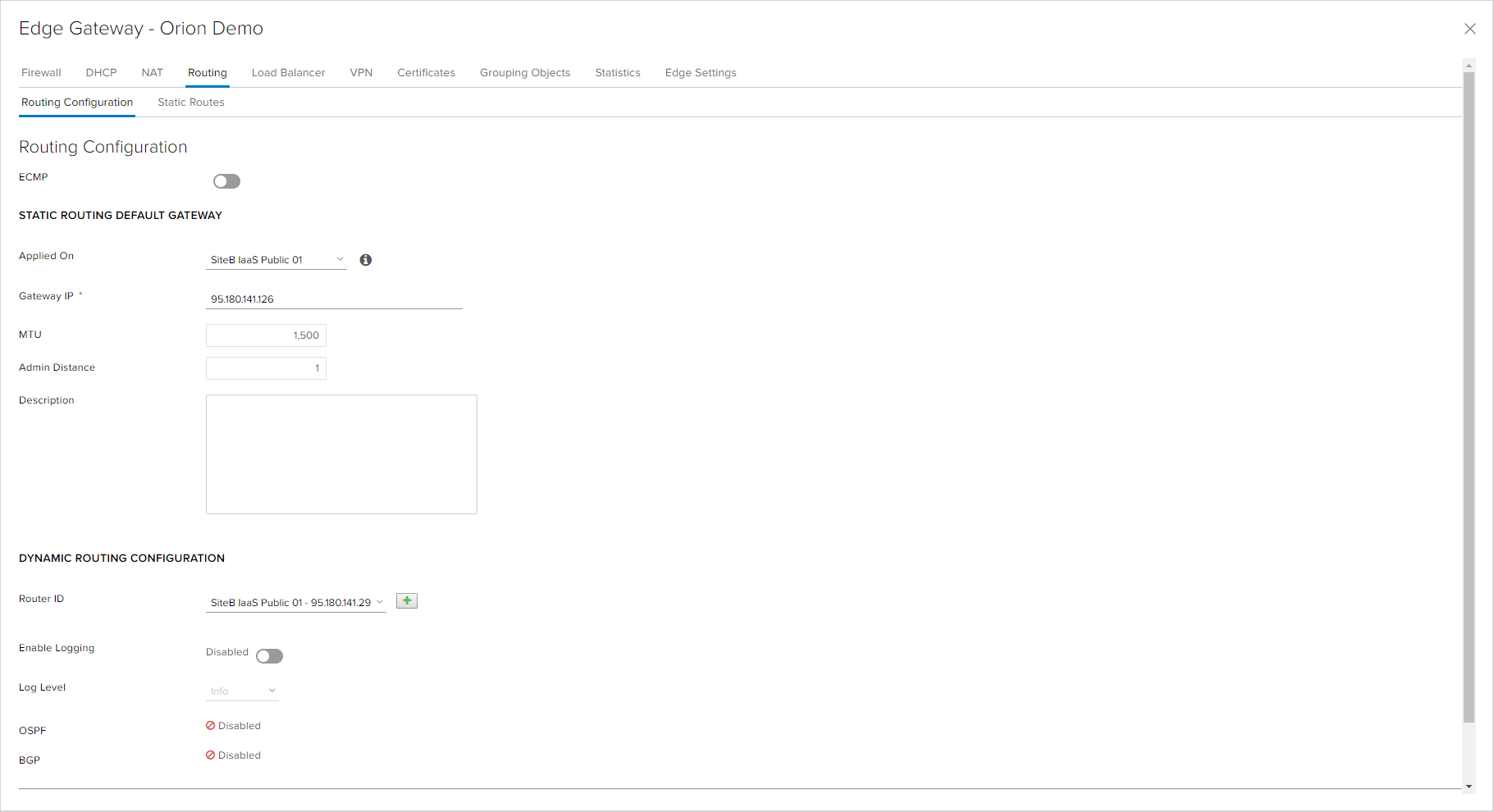

Routing

The Edge appliance routes between the internal networks connected to it, i.e. networks that have a network interface on the Edge, as well as networks that are part of an IPsec or SSL VPN configuration. This routing requires no additional route configuration; only firewall rules are needed to allow the necessary traffic between these networks. Routing towards the internet uses the Default Gateway parameter set on the Edge's external network interface. This parameter is configured automatically when the Edge appliance is provisioned.

If more than one external network is connected to the Edge, for example one providing internet connectivity and another providing L2 connectivity to the customer's other locations, the user needs to configure static routes. Static routes are configured in the Static Routes section of the Routing tab in the Edge services window. A static route requires the following parameters:

- Network: a network to which traffic needs to be routed, for example the local network in a customer's offices over an L2 link.

- Next Hop: the next hop to which traffic for the network defined in the previous field is sent. For an L2 link, the next hop is the router at the customer's location, configured in the transport network (the external network on the Edge used for the L2 link).

- Admin Distance: the priority of the route compared to other routes. Because the route towards the internet has priority 1, static routes need a different, lower priority. In routing, a smaller number means a higher priority.

- Interface: a network interface on which the route is applied. In the case of an L2 link, the external network for the L2 link needs to be chosen.
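Most routers select a route by longest prefix match first and use the administrative distance to choose between routes to the same prefix; the sketch below assumes the Edge behaves the same way. It is a minimal Python illustration in which all addresses and interface names are hypothetical: a default route towards the internet, plus a primary and a backup static route to an office network over L2 links.

```python
import ipaddress
from dataclasses import dataclass


@dataclass
class Route:
    network: str
    next_hop: str
    admin_distance: int
    interface: str


def select_route(routes, destination):
    """Pick the route for a destination IP: longest prefix first,
    then the smallest admin distance (highest priority) among equals."""
    dst = ipaddress.ip_address(destination)
    candidates = [r for r in routes if dst in ipaddress.ip_network(r.network)]
    if not candidates:
        return None
    return min(candidates,
               key=lambda r: (-ipaddress.ip_network(r.network).prefixlen,
                              r.admin_distance))


# Hypothetical addresses and interface names, for illustration only.
ROUTES = [
    Route("0.0.0.0/0", "95.180.141.1", 1, "internet"),     # default route
    Route("10.10.0.0/24", "172.16.0.1", 10, "l2-link"),    # office over L2 link
    Route("10.10.0.0/24", "172.16.1.1", 20, "l2-backup"),  # backup path, lower priority
]
```

Here traffic to 10.10.0.5 uses the primary L2 link (distance 10 beats 20), falls back to the backup link if the primary route is removed, and all other destinations follow the default route.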

Besides static routes, the Edge appliance supports dynamic routing with the two most widely used routing protocols, OSPF and BGP. Dynamic routing protocols have a wide range of use cases; in the virtual data center, they are mainly used in the following cases:

- The customer has many locations with L2 connectivity, especially if those locations need to communicate with each other through the Edge appliance. With dynamic routing, the user avoids configuring a large number of static routes, and when a location is added or reconfigured, routes are distributed to all locations automatically.

- The customer has multiple L2 connections to the virtual data center (through one or more service providers) for redundancy, minimizing the communication impact when a link goes down.

- The customer has their own public IP addresses and a dedicated AS number, using the BGP protocol.

Dynamic routing cannot be configured by organization administrators. It is configured and managed by neoCloud administrators in coordination with the customer. Nevertheless, the customer has an overview of the dynamic routing protocol status, as shown in the previous image.

DHCP

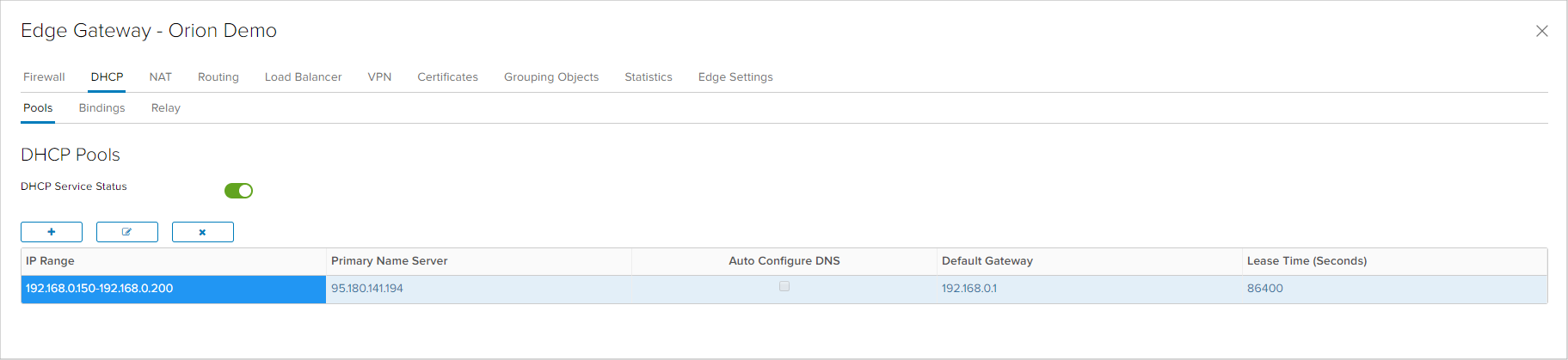

DHCP functionality enables automated assignment of dynamic IP addresses to virtual machines in the virtual data center. To configure DHCP, first enable the DHCP service in the DHCP Service Status field. To create a new DHCP configuration, create a new Pool and set its parameters: the address range served by DHCP and the parameters that DHCP clients receive from the server (Domain Name, DNS Server, Default Gateway, Subnet Mask and Lease Time).

The DHCP functionality offers two additional features: Bindings and Relay. With Bindings, the user can reserve an IP address from DHCP for a specific MAC address of a virtual machine network adapter. Relay forwards DHCP requests to another DHCP server, allowing the customer to use existing DHCP infrastructure to assign IP addresses in the virtual data center.
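How a pool, its parameters and bindings interact can be illustrated with a small in-memory model. This is a hypothetical Python sketch, not the Edge's DHCP server: the class, the lease bookkeeping and all example values (domain name, DNS server, gateway, 86400-second lease) are assumptions. A binding always wins for its MAC address, an unexpired lease is renewed with the same address, and otherwise the first free address in the range is offered, skipping reserved addresses.

```python
import ipaddress
from dataclasses import dataclass, field


@dataclass
class DhcpPool:
    start: str
    end: str
    domain_name: str
    dns_servers: list
    default_gateway: str
    subnet_mask: str
    lease_time: int = 86400                        # seconds; illustrative value
    bindings: dict = field(default_factory=dict)   # MAC -> reserved IP (Binding)
    leases: dict = field(default_factory=dict)     # MAC -> (IP, expiry time)

    def _addresses(self):
        ip, last = ipaddress.ip_address(self.start), ipaddress.ip_address(self.end)
        while ip <= last:
            yield ip
            ip += 1

    def request(self, mac, now):
        """Return the DHCP offer for a client MAC at time `now` (seconds)."""
        if mac in self.bindings:
            ip = ipaddress.ip_address(self.bindings[mac])      # reservation wins
        elif mac in self.leases and self.leases[mac][1] > now:
            ip = self.leases[mac][0]                           # renew existing lease
        else:
            taken = {addr for addr, expiry in self.leases.values() if expiry > now}
            taken |= {ipaddress.ip_address(b) for b in self.bindings.values()}
            ip = next((a for a in self._addresses() if a not in taken), None)
            if ip is None:
                raise RuntimeError("DHCP pool exhausted")
        self.leases[mac] = (ip, now + self.lease_time)
        return {
            "ip": str(ip),
            "subnet_mask": self.subnet_mask,
            "default_gateway": self.default_gateway,
            "dns_servers": self.dns_servers,
            "domain_name": self.domain_name,
            "lease_time": self.lease_time,
        }
```

With a range of 192.168.0.100 to 192.168.0.102 and a binding of 192.168.0.100 for one MAC address, other clients receive .101 and .102, and a further client is refused until one of those leases expires.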

VPN

IPsec VPN establishes an encrypted tunnel between two network locations, over the internet (supporting both static and dynamic public IP addresses) or over L2 connectivity, increasing the security of network communication. For IPsec VPN, all participating locations must use different local networks, with IP address ranges that do not overlap anywhere in the customer's infrastructure. IPsec VPN can be fully configured and managed by the customer in the Edge appliance services interface.
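Before configuring the sites, it can help to check that no two locations use overlapping subnets. A minimal Python sketch using the standard `ipaddress` module follows; the function name and the example location names and subnets are hypothetical.

```python
import ipaddress
from itertools import combinations


def find_overlaps(sites):
    """Return every pair of subnets from *different* locations that overlap.

    `sites` maps a location name to the list of local subnets (CIDR strings)
    it will route through the IPsec VPN.
    """
    nets = [(site, ipaddress.ip_network(cidr))
            for site, cidrs in sites.items() for cidr in cidrs]
    return [
        (site_a, str(net_a), site_b, str(net_b))
        for (site_a, net_a), (site_b, net_b) in combinations(nets, 2)
        if site_a != site_b and net_a.overlaps(net_b)
    ]
```

For example, a branch office using 192.168.1.128/25 would be reported as overlapping with a virtual data center network 192.168.1.0/24, and one of them would have to be renumbered before the tunnel can route correctly.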

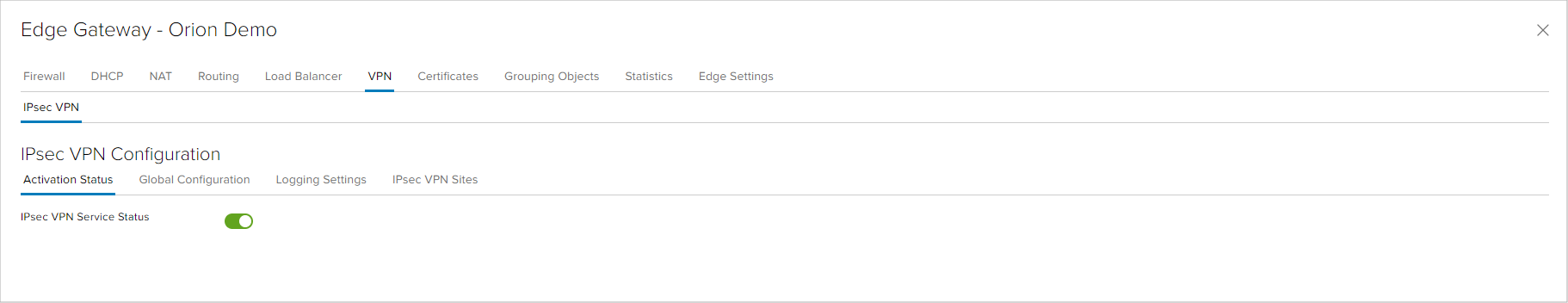

The first step is to enable the IPsec VPN service.

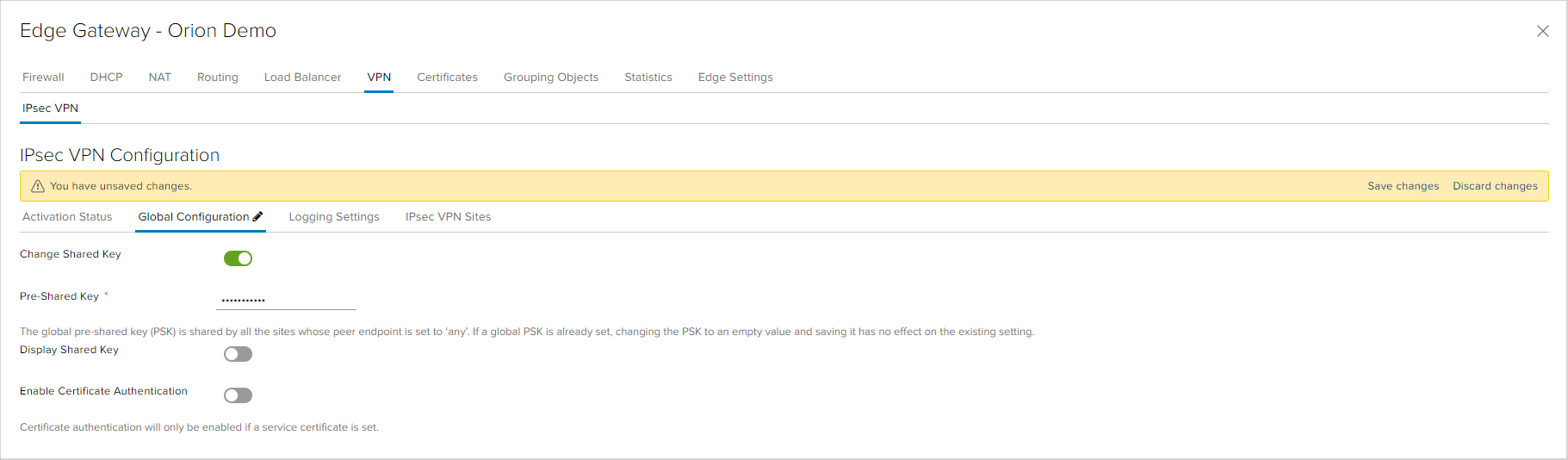

If the IPsec VPN is established with a peer that has a dynamic IP address, a global pre-shared key must be entered in the Global Configuration tab. This tab also provides the option to enable certificate authentication for VPN links.

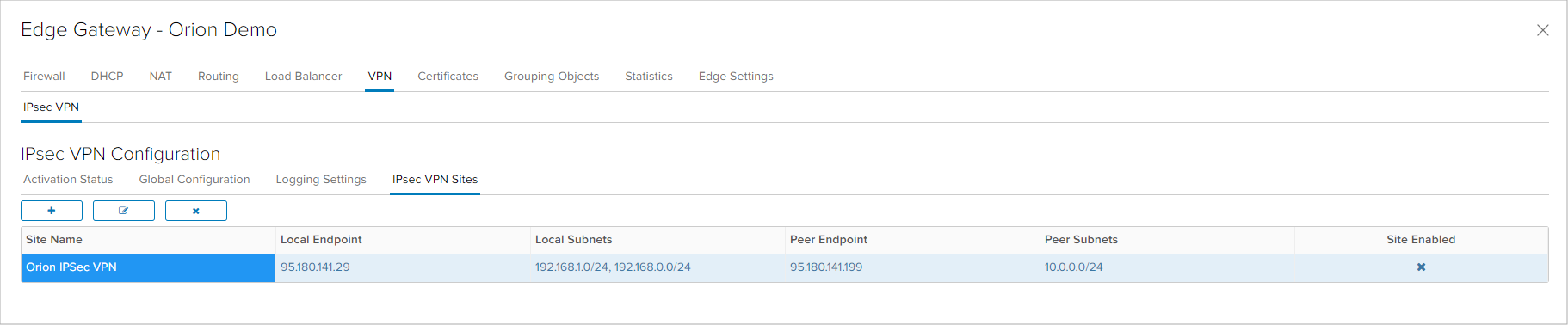

The last tab of the VPN window in the Edge appliance services interface contains the IPsec VPN configuration. Each location with which an IPsec VPN is established must be defined as a Site.

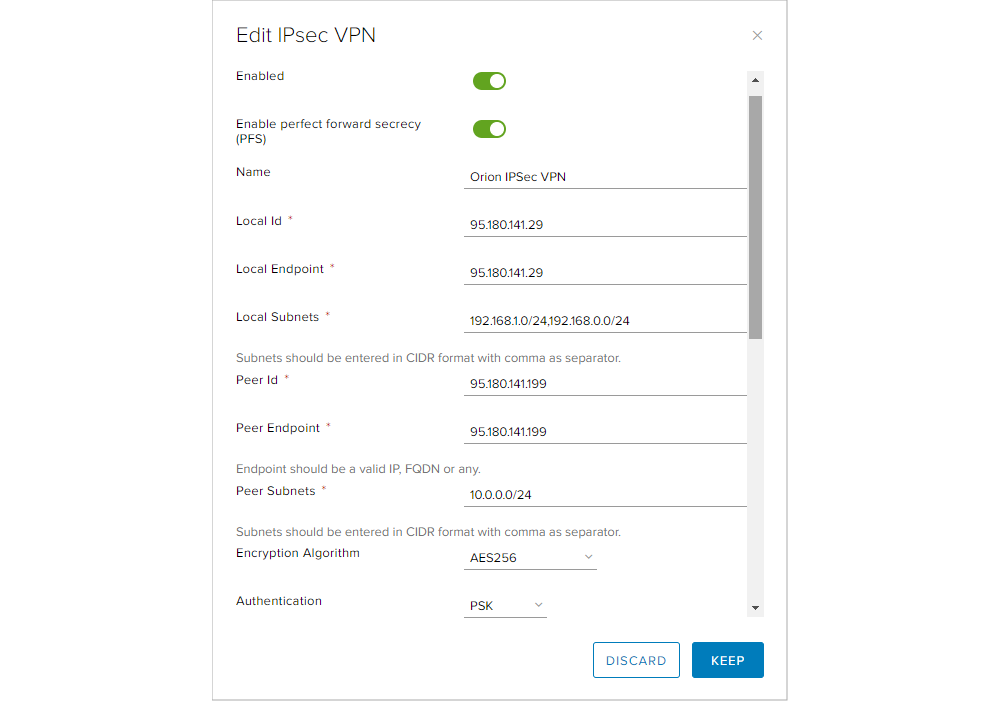

When creating an IPsec VPN site, the following parameters need to be specified:

- Enable Perfect Forward Secrecy (PFS): increases the security of the encryption key exchange.

- Name: Name of the IPsec VPN connection.

- Local ID: identification of the Edge appliance in the connection. Usually the Edge public IP address is used, but in IPsec VPN connections where the peer does not have a static IP address, a hostname is used.

- Local Endpoint: The public IP address that is used for the connection.

- Local Subnets: local virtual data center networks that need to be routed in the IPsec VPN.

- Peer ID: identification of the other device (peer) in the connection. When connecting to a device with a static IP address, that address is used; when the other device has a dynamic public IP address, a hostname is used.

- Peer Endpoint: the static public IP address of the other device, or any when its public IP address is dynamic.

- Peer Subnets: remote location local networks that need to be routed in the IPsec VPN.

- Encryption Algorithm: choice between AES, AES256 or AES-GCM encryption algorithms.

- Authentication: choice between Pre-Shared Key (PSK) and Certificate authentication.

- Pre-Shared Key: when configuring an IPsec VPN with a peer that has a static public IP address, a PSK must be entered. The global PSK is used only for IPsec VPNs with dynamic public IP addresses.

- Diffie-Hellman Group: choice between DH2, DH5, DH14, DH15 and DH16.

- Digest Algorithm: choice between SHA and SHA256.

- IKE Option: choice between IKEv1, IKEv2 and IKEFlex (attempts to establish over IKEv2, reverts to IKEv1 if IKEv2 is unsuccessful).

- IKE Responder Only: defines whether the Edge device can initiate IKE negotiation or only responds to it.

- Session Type: choice between a Policy based session and a Route based session. With a Policy based session, the local networks need to be defined as interfaces on the Edge appliance, while with a Route based session a Virtual Tunnel Interface (VTI) is created for each network that needs to be carried in the IPsec tunnel.
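The site parameters listed above can be captured in a small data structure that checks the choices and the pre-shared key rules before they are entered in the interface. This is a hypothetical Python sketch: the allowed values come from the list above, but the class, the field names and the default values are illustrative assumptions (they are not neoCloud's defaults), and the Edge performs its own validation.

```python
import ipaddress
from dataclasses import dataclass

# Choices listed in this document for an IPsec VPN site.
CHOICES = {
    "encryption": {"AES", "AES256", "AES-GCM"},
    "authentication": {"PSK", "Certificate"},
    "dh_group": {"DH2", "DH5", "DH14", "DH15", "DH16"},
    "digest": {"SHA", "SHA256"},
    "ike_option": {"IKEv1", "IKEv2", "IKEFlex"},
    "session_type": {"Policy based", "Route based"},
}


@dataclass
class IPsecSite:
    name: str
    local_id: str
    local_endpoint: str
    local_subnets: list
    peer_id: str
    peer_endpoint: str              # static public IP, or "any" for a dynamic peer
    peer_subnets: list
    # Illustrative defaults only; they are not neoCloud's defaults.
    encryption: str = "AES256"
    authentication: str = "PSK"
    pre_shared_key: str = ""
    dh_group: str = "DH14"
    digest: str = "SHA256"
    ike_option: str = "IKEv2"
    session_type: str = "Policy based"
    pfs: bool = True
    responder_only: bool = False

    def validate(self, global_psk=""):
        """Return a list of problems; an empty list means the site looks consistent."""
        errors = [f"{attr}={getattr(self, attr)!r} is not one of {sorted(allowed)}"
                  for attr, allowed in CHOICES.items()
                  if getattr(self, attr) not in allowed]
        if self.authentication == "PSK":
            if self.peer_endpoint == "any" and not global_psk:
                errors.append("dynamic peer: a global pre-shared key is required")
            elif self.peer_endpoint != "any" and not self.pre_shared_key:
                errors.append("static peer: a site pre-shared key is required")
        for local in map(ipaddress.ip_network, self.local_subnets):
            for peer in map(ipaddress.ip_network, self.peer_subnets):
                if local.overlaps(peer):
                    errors.append(f"local {local} overlaps peer {peer}")
        return errors
```

A site towards a peer with a static address needs its own PSK, while a site whose Peer Endpoint is any validates only once a global PSK is supplied, mirroring the Global Configuration rule described earlier.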

L2 VPN establishes an encrypted tunnel between two locations over the internet or over an L2 link, extending the same network subnet across both locations. L2 VPN enables migrating services into the virtual data center without changing server IP addresses, implementing new connectivity or routing, or defining new firewall rules. L2 VPN is configured by neoCloud administrators; in addition to the required Edge configuration, a component must be installed and configured in the customer's VMware vSphere virtual environment.

SSL VPN connects client computers directly to the virtual data center over an encrypted tunnel. An SSL VPN client is installed on the end user's computer; Windows, Linux and Mac operating systems are supported. SSL VPN is configured by neoCloud administrators in coordination with the customer, who defines the user profiles and the networks the clients connect to. Access to virtual machines in the virtual data center can be limited with firewall rules. Additionally, the SSL VPN service supports LDAP, so if the customer has an LDAP directory (or Active Directory) in the virtual data center, the user credentials from the customer's infrastructure can be used.

Load Balancer

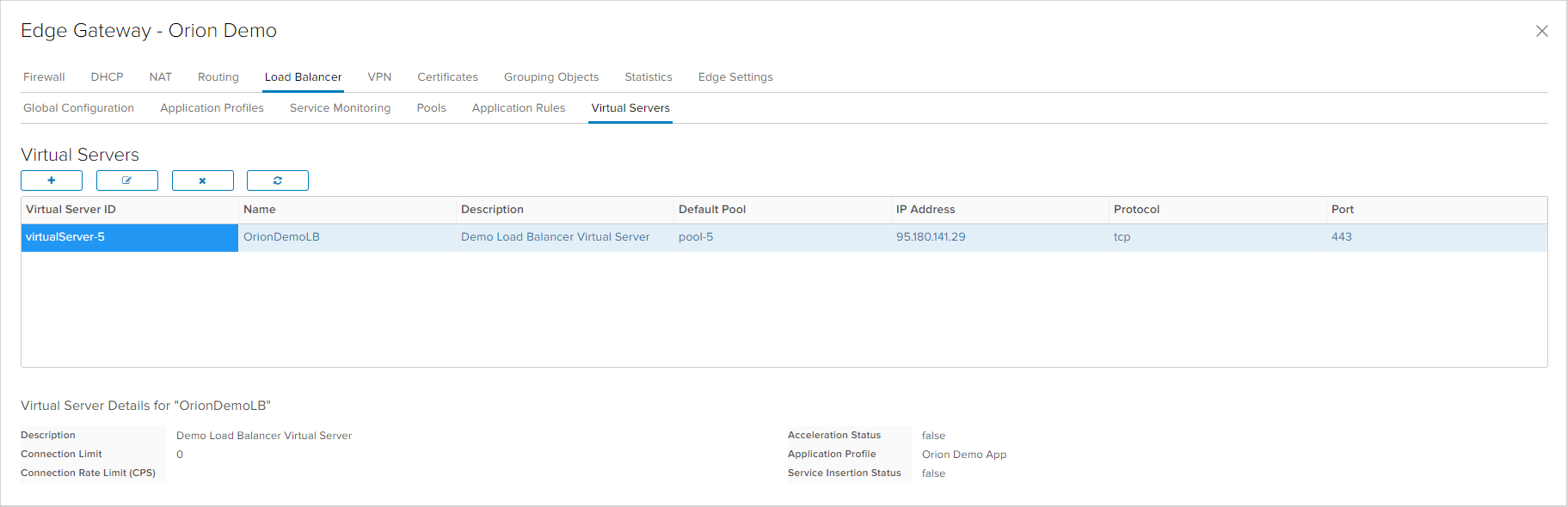

This Edge appliance service distributes application traffic that uses the TCP, UDP, HTTP or HTTPS protocols. The Load Balancer 'listens' for the defined traffic and forwards it to two or more servers that serve the application sessions.

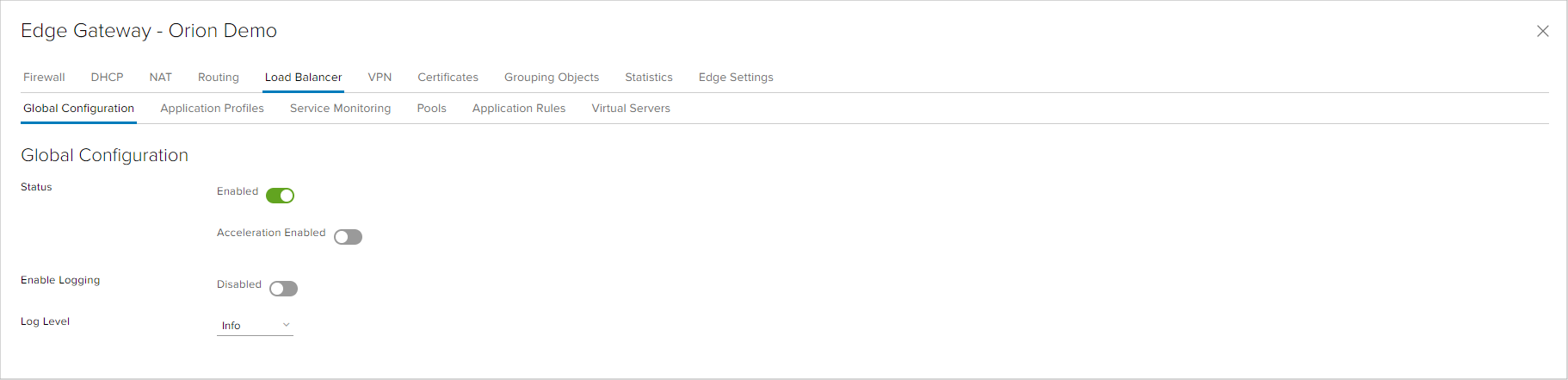

Initially, the service needs to be enabled in the global configuration of the Load Balancer service.

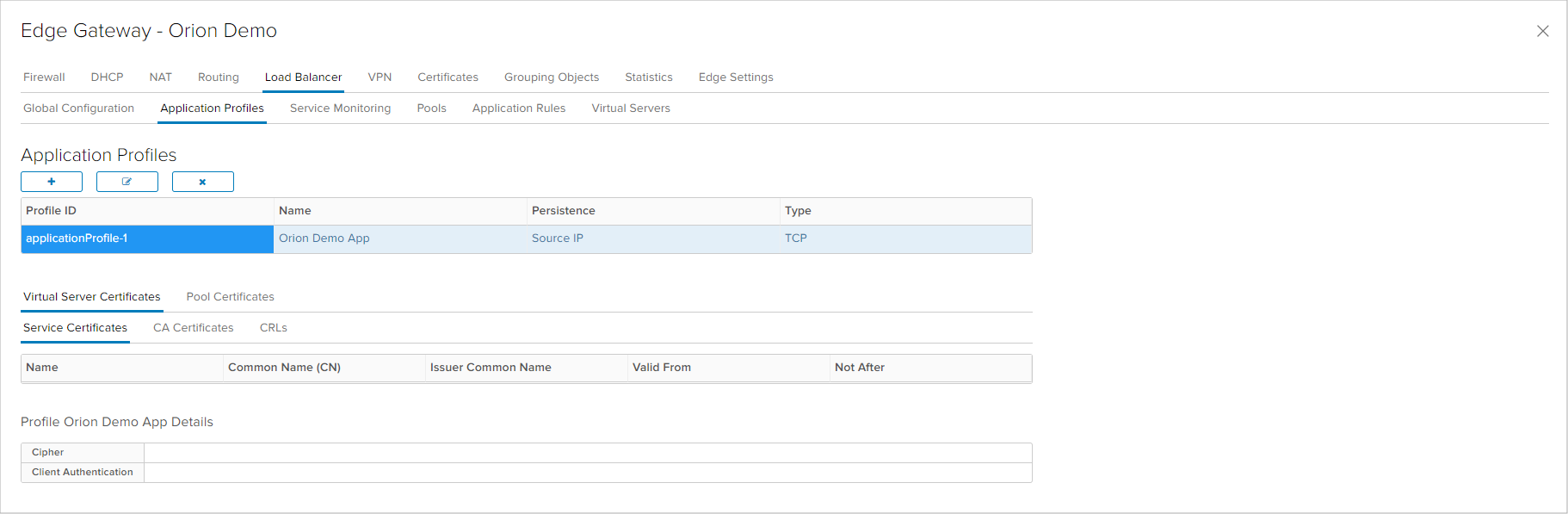

Application Profiles are the first step in configuring a Load Balancer: the application type is specified by selecting the protocol (TCP, UDP, HTTP or HTTPS) and the session persistence method. In most configurations, Source IP is the most appropriate persistence method. For HTTPS applications, the user can configure parameters of the application certificate, which can be installed on the Edge appliance, or enable SSL Passthrough so that the SSL connection is terminated on the application server.

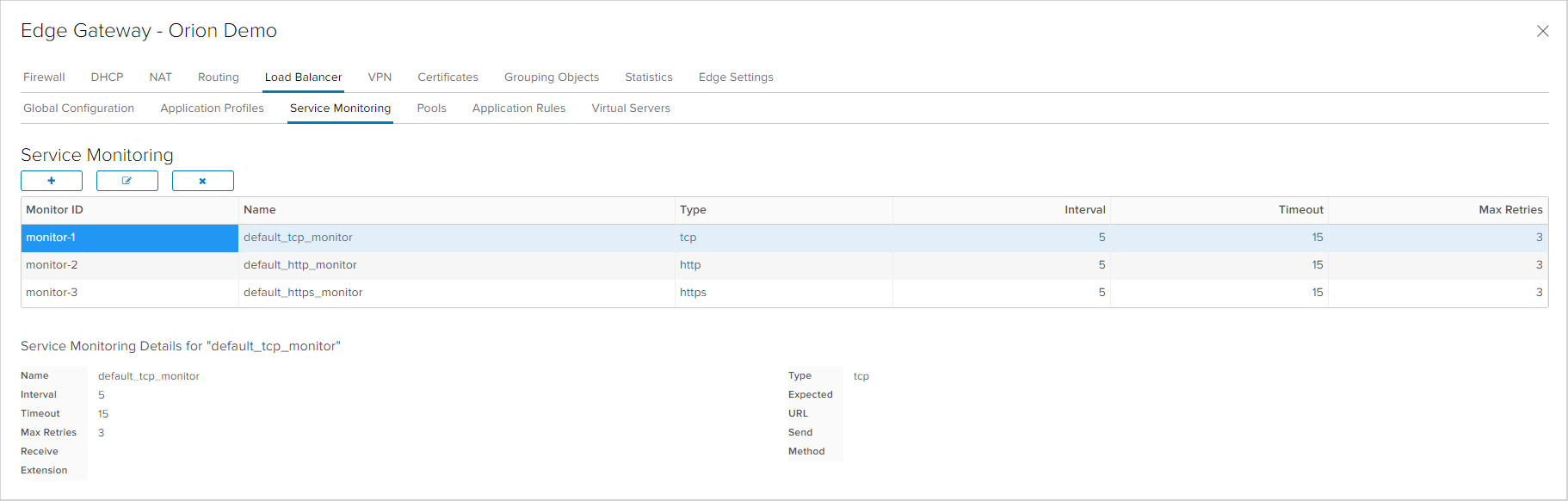

The Service Monitoring tab specifies how the load-balanced application is monitored. Based on this monitoring, the Load Balancer decides whether an application server is active and can accept sessions, and therefore whether traffic can be forwarded to it. In the default configuration, three monitors are predefined, for TCP, HTTP and HTTPS traffic. HTTP and HTTPS monitors have additional options that define the expected HTTP response from the server, rather than only checking whether the server 'listens' on its ports.

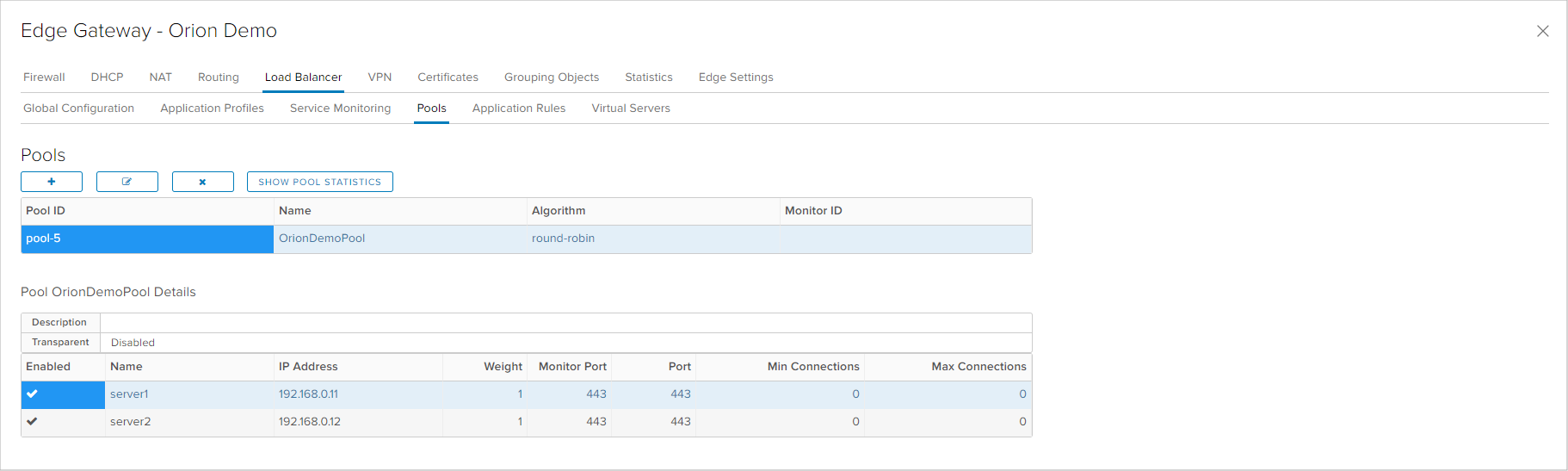

The servers behind the Load Balancer are defined in the Pools tab, together with the session forwarding algorithm. One of the following algorithms can be chosen:

- Round Robin is an algorithm where each new session is forwarded to the next member in the list of members.

- IP Hash is an algorithm where the forwarding decision is made by hashing the session's source and destination IP addresses.

- Least Connected maintains a count of active sessions for each member and sends new sessions to the member with the fewest active connections.

- URI (Uniform Resource Identifier), available only for HTTP services, hashes the left part of the URI (before the question mark) and divides it by the total weight of the active members to determine the member. This way, a given URI is always forwarded to the same member, as long as that member is active.

- HTTPHEADER looks up the HTTP header named in the algorithm parameter in each request and uses its value to select the member that takes over the session. If the header is not present, round robin is used.

- URL: the URL parameter specified in the algorithm argument is looked up in the query string of each HTTP GET request, and its value is hashed to determine the member. This allows tracking of user identifiers, so that the same user ID is always sent to the same member, as long as that member is active. If no parameter is found, round robin is used.

In the Members table, at least two members of the Load Balancer pool must be entered; for each member, the user defines the IP address, the weight, and the traffic forwarding and monitoring ports.
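To make the selection algorithms concrete, here is a simplified Python model of Round Robin, IP Hash, Least Connected and URI over the pool members. It is an illustration under stated assumptions, not the Edge's implementation: `zlib.crc32` stands in for the Edge's hash functions, Round Robin is unweighted for simplicity, the monitor result is represented by a hypothetical `active` flag, and the second member's IP 192.168.0.12 is made up.

```python
import itertools
import zlib
from dataclasses import dataclass


@dataclass
class Member:
    name: str
    ip: str
    weight: int = 1
    active: bool = True     # stands in for the service monitor result
    connections: int = 0    # active sessions, used by Least Connected


def _hash(text):
    # Stable hash; Python's built-in hash() of str is randomized per process.
    return zlib.crc32(text.encode())


class Pool:
    def __init__(self, members):
        self.members = members
        self._rr = itertools.count()

    def _active(self):
        up = [m for m in self.members if m.active]
        if not up:
            raise RuntimeError("no active members in pool")
        return up

    def round_robin(self):
        up = self._active()
        return up[next(self._rr) % len(up)]

    def ip_hash(self, src_ip, dst_ip):
        up = self._active()
        return up[_hash(src_ip + dst_ip) % len(up)]

    def least_connected(self):
        return min(self._active(), key=lambda m: m.connections)

    def uri(self, uri):
        # Hash the part of the URI before '?' and map the result onto the
        # cumulative weights of the active members.
        up = self._active()
        point = _hash(uri.split("?", 1)[0]) % sum(m.weight for m in up)
        for m in up:
            if point < m.weight:
                return m
            point -= m.weight
```

Members that fail monitoring are skipped by every algorithm, which is why the monitors described in the Service Monitoring tab matter for availability.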

In Application Rules, the user can configure scripts as application rules. Detailed information on the supported scripts and formats can be obtained in the official documentation.

The last step in configuring the Load Balancer is to define the Virtual Server, which links all previously defined parameters (Application Profile and Default Pool) and specifies the protocol and the port on which the virtual server listens. Another crucial parameter is the Edge appliance IP address that accepts the session traffic to be forwarded; it can be one of the available public IP addresses or an internal IP address defined on an Edge appliance interface.

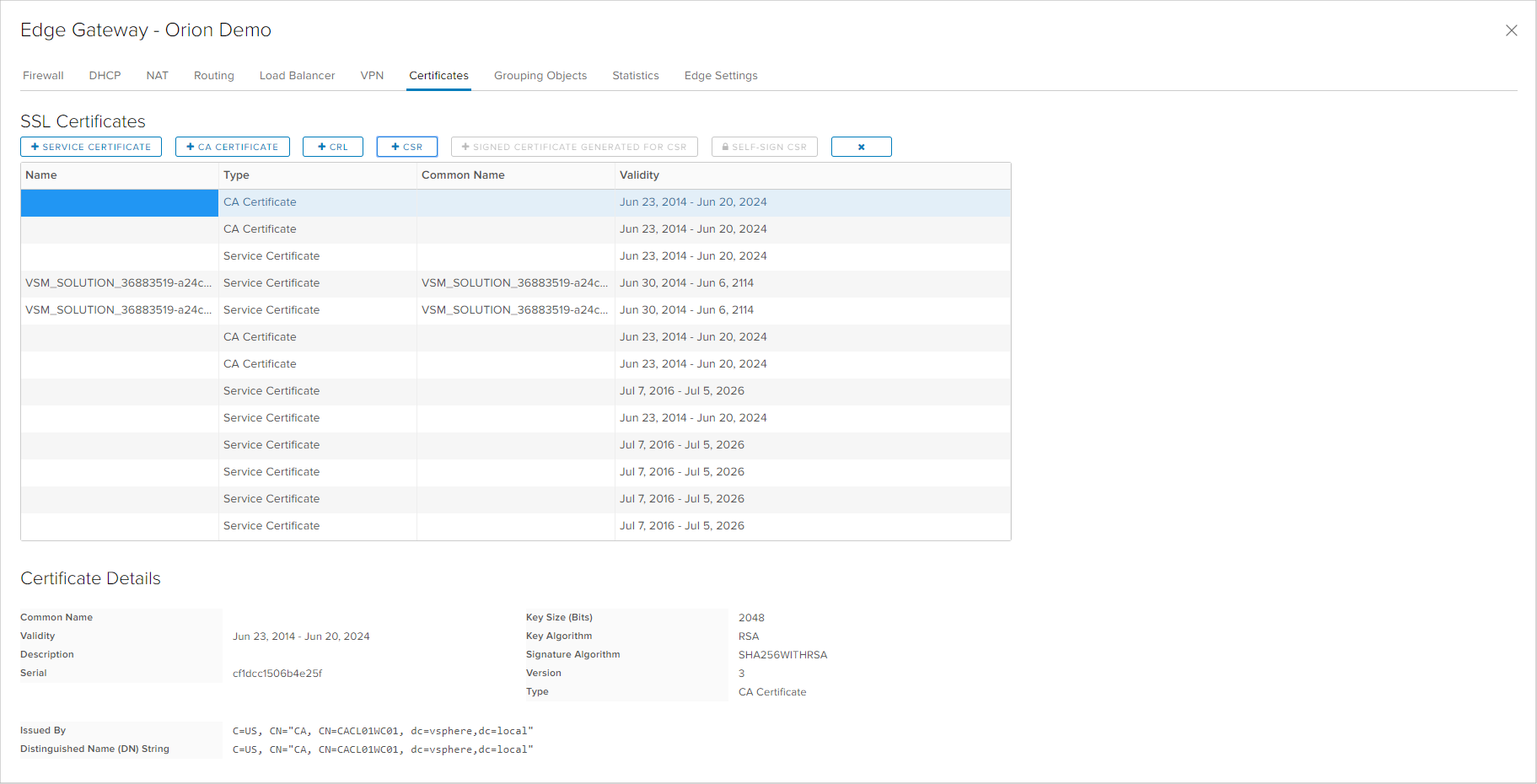

Certificates

The Edge appliance can use certificates for the Load Balancer and VPN services. Each Edge appliance has internally generated self-signed certificates, but customers can use certificates issued by a public or internal Certificate Authority.

To use certificates issued by a Certificate Authority, the user needs to import the certificate as a Service Certificate, the Certificate Authority certificate as a CA Certificate and, optionally, the list of revoked certificates as a CRL. Additionally, the Edge appliance can generate a Certificate Signing Request (CSR), which can be submitted to the Certificate Authority to issue a certificate. When creating the CSR, all parameters that the certificate needs to contain must be entered.

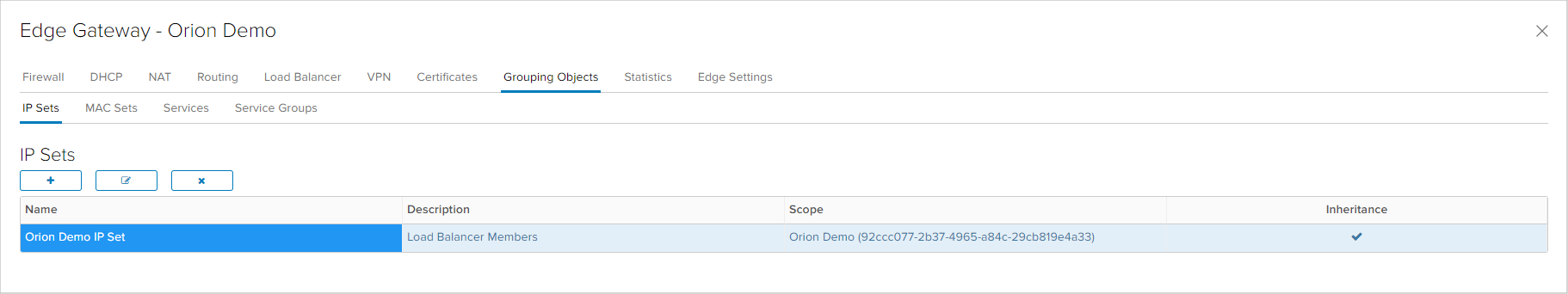

Grouping Objects

The Grouping Objects tab contains objects that can be used in some of the Edge services, such as Firewall and NAT, to simplify management of the Edge configuration. IP Sets, MAC Sets, Services and Service Groups can be created as Grouping Objects.

IP Sets and MAC Sets are objects created by users and contain lists or ranges of IP or MAC addresses, while Services and Service Groups are predefined.
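An IP Set is essentially a named list of addresses and ranges that a rule can reference instead of repeating the individual entries. The hypothetical Python sketch below models that idea for IPv4; the class name and the accepted entry formats (a single address, a CIDR network, or a `start-end` range) are assumptions for illustration.

```python
import ipaddress


class IPSet:
    """Hypothetical IP Set: single addresses, CIDR networks and 'start-end' ranges."""

    def __init__(self, name, entries):
        self.name = name
        self._ranges = []  # list of (first, last) address pairs
        for entry in entries:
            entry = entry.strip()
            if "-" in entry:
                first, last = (ipaddress.ip_address(p.strip()) for p in entry.split("-", 1))
            else:
                # A single address becomes a /32 network.
                net = ipaddress.ip_network(entry, strict=False)
                first, last = net.network_address, net.broadcast_address
            if first > last:
                raise ValueError(f"invalid range {entry!r}")
            self._ranges.append((first, last))

    def __contains__(self, address):
        ip = ipaddress.ip_address(address)
        return any(first <= ip <= last for first, last in self._ranges)
```

Usage: `"192.168.0.25" in IPSet("web-servers", ["192.168.0.20-192.168.0.29"])` evaluates to `True`, so a firewall rule referencing the set covers every address it lists.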

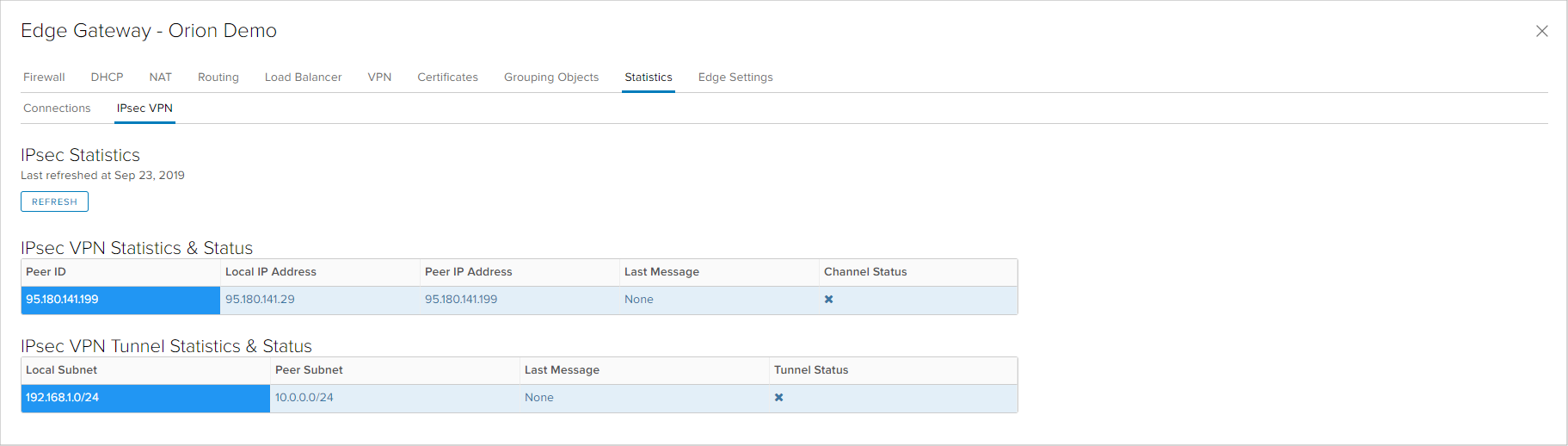

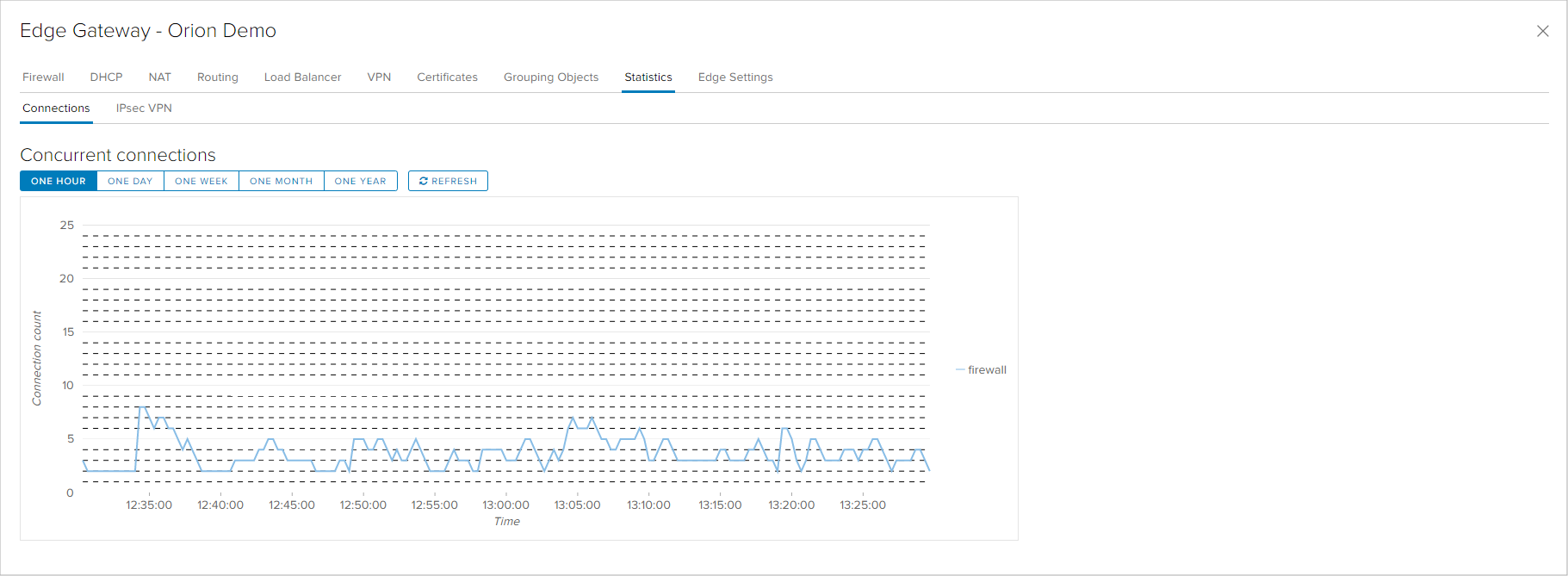

Statistics

The Statistics tab of the Edge appliance provides two types of statistical information that give a status overview or help with diagnostics. The first is a graph of the number of active connections on the Edge appliance.

The other option is statistical information and status of the IPsec VPN connections and tunnels.